When I started deploying machine learning applications and first time encountered the word MLOps, then it seems like there is no difference between MLOps and DevOps. However, after reading multiple articles and research papers, it becomes clear that MLOps and DevOps are different in terms of the number of operations they perform to productionaliize the application. In DevOps, practices include continuous integration and continuous deployment that are CI/CD. MLOps talks about CI/CD and also the Continuous Training. Machine Learning is not only about ML code but also about data and models. And hence DevOps practices are not enough to productionalize Machine Learning applications. In this article, I have explained important features of MLOps and the key differences from traditional DevOps practices.

Table of Contents

1. What is DevOps – Quick Introduction

2. Software Development vs Machine Learning

4. Key Advantages of using MLOps

5. Similarities between MLOps and DevOps

DevOps – Development and Operations

In today’s competitive world, it is all about how fast you make your features available to the end user. DevOps help the project team to quickly integrate new features and make them available to the end users with the help of automated pipeline of DevOps.

DevOps uses two key component during its lifecycle:

- Continuous Integration: Merging the codebase to a central code repository like git and bitbucket, automating the build process of the software system using Jenkins and running the automated test cases.

- Continuous Delivery: Once the new features are developed, tested and integrated in the continuous integration phase, there is a need to automatically deploy them so that they are available to end users. This automated build and deploy is done in continuous delivery phase of the devops.

When the project is deployed and users start using it, Monitoring different metrics become important. Under monitoring DevOps engineer takes care multiple things like application monitoring, usage monitoring, visualizing the key metrics etc.

How Machine Learning is different from traditional Software Development

- According to the paper “Hidden Technical Debt in Machine Learning Systems” Only a fraction of a real-world ML system is composed of ML code. Along with ML code, we need to consider Data cleaning, Data Versioning, Model versioning, Continuous training of the models on new data set.

- Testing a machine learning system is different than the traditional software testing mechanism. Testing of Machine Learning application is beyond than just unit testing. We need to consider data checks and data drift, model drift, evaluating model performance deployed into production.

- Machine learning systems are highly experimental. You can not guarantee that an algorithm will work beforehand without first doing some experiments. Therefore, there is a need to track different experiments, feature engineering steps, model parameters, metrics etc to know in future that on which experiment algorithm is producing the optimal results.

- Deployment of machine learning models is very specific depending on the nature of the problem it is trying to solve. Most part of the machine learning pipeline includes things related to data. And hence Machine learning pipeline has multiple steps which include data processing, feature engineering, model training, model registry and model deployment.

- Over the period of time, model output should be consistent. That is why we need to keep a track of data distribution and other statistical measures related to data over the period of time. The live data should be similar to the data used for training the model.

- People who develop machine learning models are not focused on software practices as they are often not from a software background. They focus more on the data and model side.

What is MLOps – Machine Learning and Operations

MLOps or ML Ops is a set of practices that aims to deploy and maintain machine learning models in production reliably and efficiently. The word is a compound of “machine learning” and the continuous development practice of DevOps in the software field.

wikipedia

MLOps is the union of DevOps, machine learning, and data engineering. Built on DevOps’ existing approach, MLOps solutions are developed to increase re-usability, facilitate automation, manage data drift, model versioning, experiment tracking, continuous training and extract richer and consistent insights in a machine learning project.

Recently, Andrew Ng spoke about how the machine learning community can leverage MLOps tools to make high-quality datasets and AI systems that are repeatable and systematic. He called for shifting the focus from model-centric to data-centric machine learning development. Andrew also said that, going forward, MLOps can play an important role in ensuring a high-quality and consistent flow of data throughout all the stages of the project.

Andrew Ng

Last few years have seen drastic change in data generation rate. Almost 90 percent of the total data present today is generated during last few years only. If you are reading this post, I am assuming that it would be very clear to you that Big Data helps in developing actionable insights but at the same time poses a few challenges. Those challenges include acquisition and cleaning of large data, tracking and versioning for models, deployment of monitoring pipelines for production, scaling machine learning operations etc. That’s where MLOps can help the Machine Learning community to tackle all those challenges which can not be solved by DevOps alone.

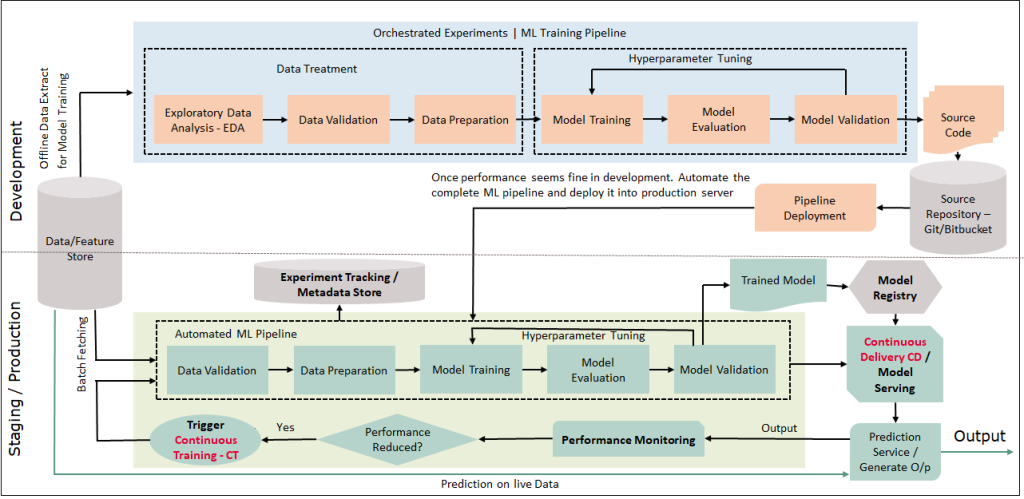

This MLOps setup includes the following components:

- Source control

- Test and build services

- Deployment services

- Model registry

- Feature store

- ML metadata store

- ML pipeline orchestrator

A more detailed architecture including the automated pipeline for continuous training is shown below:

Key Advantages of using MLOps

- Continuous Training: Using MLOps, we can setup continuous training of the models. Continuous training is very important as with time data changes and it affects the model output as well. Hence to have the consistent model output, it is required to have continuous training with the new coming data.

- Experiment Tracking: When we develop a machine learning model, we run a lot of experiments like hyper parameter tuning, different sampling data for training, different model output with respect to different parameters. So after a lot of experiments, we get the best output model. But we don’t know, which experiment produces the optimal result because we have not saved these experiments. And now if we come back few weeks later then we have to re-run the everything to get the optimal result. This is where experiment tracking helps us in recording the experiments automatically with little configuration.

- Data Drift: When an ML model is first deployed in production, data scientists are predominantly concerned with how well the model will perform over time. The major question that they’re asking is whether the model is still capturing the pattern of new incoming data as effectively as it did during the design phase? So if data is changed over time, model performance will reduce as it is trained on the data which is not the same with new coming data in terms of statistical measurements. And this change in data is known as data drift which directly affects the model performance and hence it should be taken care. There are some statistical techniques to check the data drift like Kolmogorov-Smirnov test however in MLOps provide some ready made tools which you can use for this purpose. Example: Hydrosphere and Fiddler

- Model Registry: With a model registry, you can ensure that all the key values (including data, configurations, environment variables, model code, versions, and docs) are in one place, where everyone responsible has access. It helps to version the models and faster deployments. Tools which support model registry out of the box like MLFlow, Azure Machine Learning studio, Neptune AI etc.

- Visualization: When you plot data then it is lot more self explanatory than presented in tabular numbers. That is where visualization of different machine learning metrics, performance scores, experiment becomes crucial. You can do all of those (or most of those) yourself but there are tools that you can use which helps you to speedup the machine learning development.

- Monitoring: You collect statistics on the model performance based on live data. The output of this stage is a trigger to execute the pipeline or to execute a new experiment cycle. Basically this helps to trigger the continuous training pipeline. Apart from this, there could be many more thing to monitor like usage statistics, performance monitoring, application and system level logging etc. There are various tools available for monitoring llike prometheus, opentelemetry etc.

Similarities of MLOps and DevOps

- The two main component of DevOps Continuous Integration and Continuous delivery are also needed in MLOps.

- ML Code testing is same as in DevOps. As it will be the python code where DevOps testing methodologies can be applied. [There is also model testing, data validation testing which are new to MLOps that are mentioned in next section below]

What is different in MLOps in comparison with DevOps

- Data Quality and Drift: In MLOps, in addition to testing the code, you also need to ensure data quality is maintained across the machine learning project life cycle. Make sure data is not changing over time else need to re-train the model.

- More than a traditional Deployment: In MLOps, you may not necessarily be deploying just a model artifact. You might need to deploy the complete machine learning pipeline which includes data extraction, data processing, feature engineering, model training, model registry and model deployment.

- Continuous Training: In MLOps there is a third concept that does not exist in DevOps which is Continuous Training (CT). We need to continuously check for data drift and concept drift and whenever there is a change it will impact the model performance. So if model performance is degrading over time, we need to automatically trigger the training pipeline.

- Model Testing: Only a fraction of a real-world ML system is composed of ML code. The required surrounding elements are vast and complex. Testing a machine learning system goes beyond just unit testing. We need to consider data checks and data drift, model drift, testing and validating model performance deployed into production.

- Data and Model Versioning: In DevOps, we consider code versioning, however in machine learning we deal with different sample of data and create different versions of it while training the model. Also we generate different versions of models with respect to different hyper parameters. So in MLOps there is a need to version the data as well as the model also along with code versioning.

Tools and techniques to implement MLOps

Different tools are used for different purposes under MLOps implementation. Here I am grouping tools based on what feature they provide with respect to MLOps:

1. Meta Data Storage and Experiment Tracking:

- MLflow MLflow is an open-source platform that helps manage the whole machine learning lifecycle that includes experimentation, reproducibility, deployment, and a central model registry. The tool is library-agnostic. You can use it with any machine learning library and in any programming language.

- Comet Comet is a meta machine learning platform for tracking, comparing, explaining, and optimizing experiments and models. It allows you to view and compare all of your experiments in one place. It works wherever you run your code with any machine learning library, and for any machine learning task. Comet is suitable for teams, individuals, academics, organizations, and anyone who wants to easily visualize experiments and facilitate work and run experiments.

- Neptune Neptune is an ML metadata store that was built for research and production teams that run many experiments.

2. Data Versioning

- DVC DVC, or Data Version Control, is an open-source version control system for machine learning projects. It’s an experimentation tool that helps you define your pipeline regardless of the language you use.

- Pachyderm Pachyderm is a platform that combines data lineage with end-to-end pipelines on Kubernetes.

3. Hyperparameter Tuning

- Optuna Optuna is an automatic hyperparameter optimization framework that can be used both for machine learning/deep learning and in other domains. It integrates with popular machine learning libraries such as PyTorch, TensorFlow, Keras, FastAI, scikit-learn, LightGBM, and XGBoost.

- Sigopt SigOpt aims to accelerate and amplify the impact of machine learning, deep learning, and simulation models. It helps to save time by automating processes which makes it a suitable tool for hyperparameter tuning. You can integrate SigOpt seamlessly into any model, framework, or platform without worrying about your data, model, and infrastructure – everything’s secure.

4. Run orchestration and workflow pipelines

When your workflow has multiple steps (preprocessing, training, evaluation) that can be executed separately, you will benefit from a workflow pipeline and orchestration tool.

- Kubeflow pipelines Kubeflow is open-source ML toolkit for Kubernetes. It helps in maintaining machine learning systems by packaging and managing docker containers. It facilitates the scaling of machine learning models by making run orchestration and deployments of machine learning workflows easier.

- Polyaxon Polyaxon is a platform for reproducing and managing the whole life cycle of machine learning projects as well as deep learning applications.

- Airflow Airflow is an open-source platform that allows you to monitor, schedule, and manage your workflows using the web application. It provides an insight into the status of completed and ongoing tasks along with an insight into the logs.

- Kedro This workflow orchestration tool is based on Python. You can create reproducible, maintainable, and modular workflows to make your ML processes easier and more accurate.

5. Model deployment and serving

When you think of deploying your machine learning models, the first batch of problems you hit is how to package, deploy, serve and scale infrastructure to support models in production. The tools for model deployment and serving help with that.

- BentoML BentoML simplifies the process of building machine learning API endpoints. It offers a standard, yet simplified architecture to migrate trained ML models to production. It lets you package trained models, using any ML framework to interpret them, for serving in a production environment. It supports online API serving as well as offline batch serving.

- Cortex Cortex is an open-source alternative to serving models with SageMaker or building your own model deployment platform on top of AWS services like Elastic Kubernetes Service (EKS), Lambda, or Fargate and open source projects like Docker, Kubernetes, TensorFlow Serving, and TorchServe.

- Seldon Seldon is an open-source platform that allows you to deploy machine learning models on Kubernetes. It’s available in the cloud and on-premise.

6. After Deployment | Production Model Monitoring

- Prometheus Prometheus is an open-source systems monitoring and alerting toolkit originally built at SoundCloud. Prometheus collects and stores its metrics as time series data, i.e. metrics information is stored with the timestamp at which it was recorded, alongside optional key-value pairs called labels.

- Amazon SageMaker Model Monitor Amazon SageMaker Model Monitor is part of the Amazon SageMaker platform that enables data scientists to build, train, and deploy machine learning models. When it comes to Amazon SageMaker Model Monitor, it lets you automatically monitor machine learning models in production, and alerts you whenever data quality issues appear.

- Fiddler Fiddler is a model monitoring tool that has a user-friendly, clear, and simple interface. It lets you monitor model performance, explain and debug model predictions, analyze model behavior for entire data and slices, deploy machine learning models at scale, and manage your machine learning models and datasets.

- Hydrosphere Hydrosphere is an open-source platform for managing ML models. Hydrosphere Monitoring is its module that allows you to monitor your production machine learning in real-time.

- Evidently Evidently is an open-source ML model monitoring system. It helps analyze machine learning models during development, validation, or production monitoring. The tool generates interactive reports from pandas DataFrame.

References:

mlops-continuous-delivery-and-automation-pipelines-in-machine-learning

Get new content delivered directly to your inbox.